Romain Sestier · · 10 min

![120+ Agentic AI Tools Mapped Across 11 Categories [2026]](/_astro/ai-agent-tools-landscape-2026.D9-zOF-k.png)

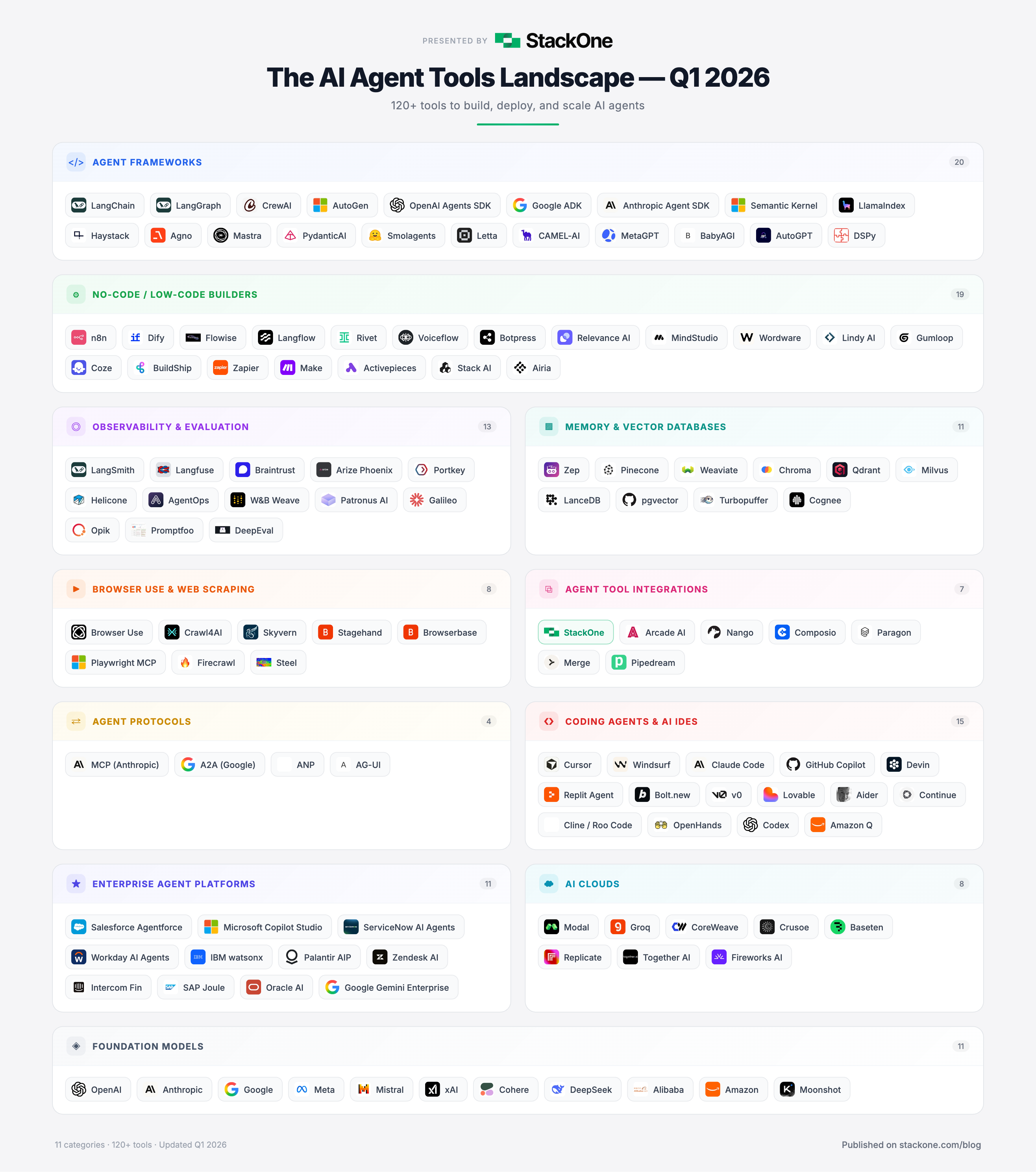

120+ Agentic AI Tools Mapped Across 11 Categories [2026]

Last updated: Q1 2026 · This post is maintained and updated quarterly.

The AI agent landscape is evolving faster than any technology category I have tracked. Six months ago, “AI agents” was still buzzword territory; today it is a category with more than 120 agentic AI tools competing for developer attention.

As CEO of StackOne, I spend my days at the intersection of AI agents and enterprise software, building the platform with the deepest action coverage for agents: 10,000+ actions spanning 200+ connectors. That gives me a front-row view of what is being adopted, what is hype, and what is becoming essential.

This post maps the entire agentic AI landscape as of early 2026: 120+ tools across 11 categories, from code-first frameworks to enterprise platforms to foundation models powering everything. It serves as a reference guide for developers selecting agentic stacks, founders scoping competitive landscapes, or enterprise leaders planning agent strategy.

What Are Agentic AI Tools?

Agentic AI tools are software frameworks, platforms, and infrastructure that enable AI systems to act autonomously — reasoning through tasks, calling external APIs, and making decisions without constant human oversight. As of Q1 2026, the landscape comprises over 120 production-ready tools across 11 categories, from code-first frameworks to enterprise platforms to the foundation models powering everything.

Table of Contents

- What Are Agentic AI Tools?

- The AI Agent Tools Landscape Map

- How the AI Agent Stack Works in 2026

- 1. AI Agent Frameworks (Code-First)

- 2. No-Code / Low-Code AI Agent Builders

- 3. AI Agent Observability & Evaluation Tools

- 4. AI Agent Memory & Vector Databases

- 5. AI Agent Tool Integrations & Infrastructure

- 6. Browser Use & Web Scraping Tools

- 7. AI Agent Protocols (MCP & A2A)

- 8. AI Coding Agents & AI IDEs

- 9. Enterprise AI Agent Platforms

- 10. AI Clouds & Inference Platforms

- 11. Foundation Models Powering AI Agents

- What Comes Next

The AI Agent Tools Landscape Map

Below is a map of the full AI agent landscape: 120+ tools across 11 categories.

This AI agent tools landscape captures the ecosystem state as of early 2026. Each category is explored in detail below.

How the AI Agent Stack Works in 2026

The AI agent ecosystem in 2026 breaks into 11 categories, each solving a different problem. At a high level:

- Models form the foundation

- AI agent frameworks provide orchestration

- No-code builders democratize access

- Memory and vector databases enable persistence

- Observability tools maintain reliability

- Tool integrations and protocols connect to the real world

- Coding agents transform software development

- Enterprise platforms bring everything to production scale

These layers are deeply interconnected, and the winners in each one will be the tools that integrate best with the others.

1. AI Agent Frameworks (Code-First)

AI agent frameworks are foundational libraries and SDKs that developers use to build, orchestrate, and deploy autonomous AI agents in code.

The most striking development of 2026 is that every major AI lab now has its own agent framework. OpenAI has the Agents SDK (evolved from Swarm), Google released ADK, Anthropic shipped the Agent SDK, Microsoft has Semantic Kernel and AutoGen, and HuggingFace built Smolagents. That signals where the industry believes value will concentrate.

LangChain remains dominant at 126k GitHub stars, but architectural momentum is shifting toward graph-based orchestration. LangGraph (24k stars) and Google ADK (17k stars) both embrace directed graphs for stateful, multi-agent workflows, moving beyond the simple chain-based patterns that defined 2024.
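To make the chain-versus-graph distinction concrete, here is a minimal sketch of graph-style orchestration in plain Python. It does not use LangGraph or ADK; `run_graph`, the node functions, and the `"END"` sentinel are all names made up for illustration. The point is only that a graph allows conditional edges and loops over shared state, which a linear chain cannot express.

```python
from typing import Callable, Dict

State = Dict[str, object]
Node = Callable[[State], State]

def run_graph(nodes: Dict[str, Node],
              router: Callable[[str, State], str],
              start: str,
              state: State,
              max_steps: int = 20) -> State:
    """Walk the graph from `start` until the router returns "END"."""
    current = start
    for _ in range(max_steps):
        if current == "END":
            return state
        state = nodes[current](state)      # run the node, get updated state
        current = router(current, state)   # pick the next node from the state
    raise RuntimeError("graph did not terminate within max_steps")

# Hypothetical nodes: a drafter and a reviewer that loop until approved.
def draft(state: State) -> State:
    revisions = state["revisions"] + 1
    return {**state, "draft": f"v{revisions}", "revisions": revisions}

def review(state: State) -> State:
    # Stand-in for an LLM review: approve once there have been two revisions.
    return {**state, "approved": state["revisions"] >= 2}

def route(current: str, state: State) -> str:
    if current == "draft":
        return "review"
    # Conditional edge: loop back to "draft" until the reviewer approves.
    return "END" if state["approved"] else "draft"

final = run_graph({"draft": draft, "review": review}, route, "draft", {"revisions": 0})
print(final)
```

The loop from `review` back to `draft` is the part a simple chain has no way to represent; real frameworks add persistence, streaming, and parallel branches on top of the same idea.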

AI Agent Frameworks to Know in 2026

- LangChain (126k stars) — Foundational library; most Python agent builders interact with it

- AutoGen (54k stars) — Microsoft’s conversation-driven multi-agent framework

- LlamaIndex (47k stars) — Data framework with 160+ connectors for RAG and agent workflows

- CrewAI (44k stars) — Role-based multi-agent teams; 60%+ Fortune 500 adoption

- Semantic Kernel (27k stars) — Microsoft’s enterprise SDK; optimal for .NET teams

- Agno (26k stars) — High-performance multi-modal agent runtime

- Smolagents (25k stars) — HuggingFace’s code-first library; agents write Python, not JSON

- LangGraph (24k stars) — Graph-based orchestration for stateful, multi-agent workflows

- Haystack (23k stars) — deepset’s production-ready orchestration framework

- OpenAI Agents SDK (19k stars) — Lightweight, production-ready evolution from Swarm

- Mastra (19k stars) — TypeScript-first from Gatsby team; 300k+ weekly npm downloads

- Google ADK (17k stars) — Code-first toolkit optimized for Gemini, works with any model

- PydanticAI (15k stars) — “FastAPI feeling” for agents; type-safe and clean

- Letta (15k stars) — Formerly MemGPT; stateful agents with long-term memory

- Anthropic Agent SDK (4.6k stars) — Build agents with Claude; custom tools and hooks

- AutoGPT (170k stars) — Pioneered autonomous agent concept; goal-driven task execution

- DSPy (23k stars) — Stanford NLP’s framework for programming LMs; built-in agent loops and ReAct patterns

- CAMEL-AI (18k stars) — Multi-agent role-playing framework; early agent collaboration via structured conversations

- BabyAGI (20k stars) — Pioneered task-driven autonomous agent pattern; creates, prioritizes, executes tasks in loops

Which AI Agent Framework Should You Choose?

For most teams in 2026, LangGraph leads for complex multi-agent orchestration in Python, Mastra for TypeScript teams, and CrewAI for rapid prototyping of role-based agents. If you are committed to a specific model provider, the lab-specific SDKs (OpenAI Agents SDK, Google ADK, Anthropic Agent SDK) offer the tightest integration.

2. No-Code / Low-Code AI Agent Builders

No-code and low-code AI agent builders let non-developers create sophisticated AI agents through visual interfaces and natural language, without writing code.

The democratization of AI agent builder tools is accelerating faster than predicted. The standout is n8n, which at 150k+ GitHub stars has become the de facto “action layer” for AI agents. Its AI Workflow Builder lets you describe workflows in plain English, and its self-hostable model appeals to teams that want control over their data.

Natural language workflow creation is now standard. Nearly every platform, from Gumloop to Lindy AI to Zapier Agents, lets you describe what you want and generates the automation for you. The builder/no-builder distinction is blurring.

No-Code AI Agent Builders to Know

- n8n (150k+ stars) — Visual workflow automation with native AI nodes; self-hostable

- Dify (114k+ stars) — Open-source LLMOps with visual workflow builder

- Flowise (30k+ stars) — Drag-and-drop AI agents built on LangChain

- Langflow — Open-source low-code builder for agentic and RAG apps

- Rivet — Visual AI programming environment by Ironclad

- Voiceflow — Build AI chat and voice agents without code

- Lindy AI — Build “AI employees” in plain English; 5,000+ integrations

- Wordware — Natural language as programming language; #1 Product Hunt launch ever

- Make / Zapier / Activepieces — Workflow automation with native AI agent capabilities; Activepieces is an MIT-licensed open-source alternative

- Workato — Enterprise automation platform; 1,200+ connectors, AI-powered recipe builder; now part of IBM

- Tray.ai — Universal automation cloud with AI capabilities; visual drag-and-drop for complex enterprise workflows

- Airia — Enterprise AI orchestration with no-code agent builder; model-agnostic with built-in AI governance

- Gumloop — AI-powered workflow automation; visual pipeline builder for complex agent workflows

- MindStudio — No-code AI agent platform; build and deploy custom AI apps without code

- Coze — ByteDance’s AI agent builder; plugin ecosystem with generous free tier

- BuildShip — Visual backend builder for AI workflows; ships API endpoints and scheduled tasks

The pricing model shift matters: most platforms have moved from per-seat to credit-based or execution-based pricing, which aligns better with bursty, unpredictable agent workloads. Expect this trend to accelerate.

3. AI Agent Observability & Evaluation Tools

AI agent observability and evaluation tools provide monitoring, tracing, and testing infrastructure needed to run agents reliably in production.

You cannot improve what you cannot measure, and as agents move into production, observability has become non-negotiable. Category validation arrived in January 2026, when Langfuse was acquired by ClickHouse. With 2,000+ paying customers, 26M+ monthly SDK installs, and 19 of the Fortune 50 as clients, Langfuse proved that open-source LLM observability is a real business.

Portkey tells another scale story: 10B+ monthly requests through its AI gateway, 99.9999% uptime, sub-10ms latency. When agent infrastructure must match database reliability, Portkey answers that need.

AI Agent Observability and Evaluation Tools

- LangSmith — Full-lifecycle observability by LangChain; works with any framework

- Langfuse — Open-source; acquired by ClickHouse; 19 Fortune 50 clients

- Braintrust — Evals and monitoring; trusted by Notion, Stripe, Vercel

- Arize Phoenix — Open-source on OpenTelemetry; framework-agnostic

- Portkey — AI gateway; 10B+ requests/month; 40+ pre-built guardrails

- Helicone — Open-source LLM observability; Rust-based high-throughput gateway

- AgentOps — Session replays and failure detection; two lines of code to start

- Weights & Biases Weave — LLM observability from MLOps leaders; trace, evaluate, iterate on workflows

- Patronus AI — Enterprise AI evaluation; automated testing, monitoring, hallucination detection

- Galileo — LLM evaluation and observability; real-time hallucination detection and guardrails

- Opik — Open-source LLM evaluation by Comet; experiment tracking and agent tracing

An emerging sub-category is agent-specific testing, driven by the reality that AI agents fail in ways traditional testing misses. Tools like Promptfoo (red teaming and vulnerability scanning), Ragas (RAG evaluation), and DeepEval (pytest-like LLM testing) bring software engineering discipline to agent development. Expect this to grow as agents take on higher-stakes tasks.

4. AI Agent Memory & Vector Databases

AI agent memory and vector databases enable agents to persist knowledge, learn from past interactions, and retrieve relevant context at scale.

Memory separates toy demos from truly useful agents. Without it, every interaction starts at zero. With it, agents learn, adapt, and build context over time. Two companies take very different approaches.

Mem0 raised $24M and became the exclusive memory provider for AWS’s Agent SDK. Its approach is self-improving memory that supports episodic, semantic, procedural, and associative types, achieving a 26% accuracy boost over OpenAI’s baseline.

Zep pursued a temporal knowledge graph approach, offering an 18.5% accuracy improvement and a 90% latency reduction versus standard baselines. Its open-source Graphiti library became the go-to for time-aware memory needs.
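For readers new to the idea, here is a minimal sketch of the basic mechanic underneath any agent memory layer: store past facts, then recall the most relevant one by similarity. This is not how Mem0 or Zep work internally. `MemoryStore` is a hypothetical class, and `embed` is a toy word-count stand-in for a real embedding model, used only so the example runs without dependencies.

```python
import math
from collections import Counter
from typing import List, Tuple

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class MemoryStore:
    """Hypothetical in-process memory: append facts, recall by similarity."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def remember(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

memory = MemoryStore()
memory.remember("user prefers weekly summary emails")
memory.remember("user works in the finance team")
memory.remember("last ticket was about password reset")

top = memory.recall("which team does the user work in")
print(top)
```

A production setup would swap `embed` for a real embedding model and the list for one of the vector databases below; dedicated memory layers then add what this sketch lacks, such as updating, expiring, and linking memories over time.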

Vector Databases for AI Agents

- Pinecone — Fully managed; scales to billions of vectors; enterprise default

- Weaviate — Open-source with native multi-tenancy and hybrid search

- Chroma — Lightweight, open-source; great for prototyping and smaller workloads

- Qdrant — High-performance, Rust-based; GPU-accelerated indexing on 2026 roadmap

- Milvus — CNCF graduated project; billion-scale similarity search

- pgvector — Already on Postgres? Start here; zero additional infrastructure

- LanceDB — Open-source embedded vector database on Lance columnar format; serverless

- Snowflake Cortex — Vector search built into Snowflake; no separate vector DB if data is already there

- Azure AI Search — Microsoft’s managed vector search; native integration with Azure OpenAI and Semantic Kernel

- Amazon Bedrock Knowledge Bases — AWS’s managed RAG service with built-in vector storage; tight Bedrock integration

Prediction: dedicated agent memory layers will become standard infrastructure in 2026, much as vector databases became standard in 2024. Agents that remember are agents that win.

5. AI Agent Tool Integrations & Infrastructure

AI agent tool integrations connect agents to external software — CRMs like Salesforce, HRIS platforms like Workday, ticketing systems like Zendesk — enabling real-world actions.

This is where agents meet reality. An agent that can reason but cannot act is merely a chatbot. The tool integration layer turns LLMs into systems that read CRMs, update tickets, and trigger workflows.
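A minimal sketch of what "acting" means mechanically: the model emits a structured request, and a thin layer maps it to a real function call. Everything here is hypothetical and for illustration only (the `tool` decorator, the `dispatch` function, the JSON shape, and the in-memory `TICKETS` dict standing in for a ticketing API); real integration platforms add authentication, schemas, and error handling on top.

```python
import json
from typing import Any, Callable, Dict

TOOLS: Dict[str, Callable[..., Any]] = {}

def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn

# Hypothetical in-memory "ticketing system" standing in for a real SaaS API.
TICKETS = {"T-1": {"status": "open"}}

@tool
def update_ticket(ticket_id: str, status: str) -> dict:
    TICKETS[ticket_id]["status"] = status
    return {"ticket_id": ticket_id, "status": status}

def dispatch(model_output: str) -> Any:
    """Parse a model's JSON tool request and execute the matching function."""
    request = json.loads(model_output)
    name = request["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**request["arguments"])

# Pretend the LLM produced this string as its next step.
result = dispatch(
    '{"tool": "update_ticket", "arguments": {"ticket_id": "T-1", "status": "closed"}}'
)
print(result, TICKETS)
```

The hard part in practice is not the dispatch loop but everything behind each registered function: credentials, rate limits, and the differences between hundreds of vendor APIs.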

The category bifurcates: broad horizontal platforms covering many apps with pre-built connectors, and developer-first tools providing building blocks for custom integrations.

AI Agent Integration Platforms

- StackOne — Full disclosure: my company. Backed by Google Ventures and Workday Ventures with $24M total funding. Built specifically as agentic integration infrastructure, it provides the deepest action coverage for AI agents: 10,000+ pre-built actions across 200+ connectors, including HubSpot, Workday, and Salesforce, with managed auth and compliance (SOC 2, GDPR, HIPAA). The AI Integration Builder extends coverage to any system or API without a pre-built connector. MCP and A2A compatible.

- Arcade AI — Focused on agent auth and secure credential management; credentials never exposed to LLM; raised $12M seed

- Nango — Developer infrastructure for custom API integrations; powers Replit and Exa; built-in MCP server

- Pipedream — Low-code workflow automation connecting 2,700+ APIs; acquired by Workday in November 2025, a signal of where enterprise agent infrastructure is heading

- Composio (27k stars) — Open-source tool integration layer for AI agents; app connectors with auth management; growing community

- Paragon — Embedded integration infrastructure with ActionKit for AI agents; 130+ connectors; MCP server support

- Merge — Unified API provider covering HRIS, ATS, and CRM integrations; built in the pre-agentic era; lacks the real-time, bidirectional capabilities agents require

The Rise of Agentic Integration Infrastructure

As AI agents move to production, a new category is emerging: agentic integration infrastructure designed specifically for agent-to-application connectivity. Unlike traditional iPaaS (built for human-triggered workflows), agentic infrastructure handles challenges unique to agents: dynamic tool discovery, managed authentication across hundreds of apps, and the compliance requirements (SOC 2, GDPR, HIPAA) that enterprise deployments demand.

This is StackOne’s position: the deepest action coverage (10,000+ pre-built actions across 200+ connectors) with managed auth and full compliance. Whether your agents run on CrewAI, LangGraph, OpenAI Agents SDK, Anthropic SDK, or Vercel AI SDK, the connectors give them access to the enterprise software they need to be useful.

The Pipedream acquisition matters. Workday, a $60B+ enterprise software company, bought an API integration platform specifically for its AI agent strategy. That says a lot about where this category is heading.

6. Browser Use & Web Scraping Tools

Browser use and web scraping tools enable AI agents to navigate, interact with, and extract data from the web — automating browser-based workflows and gathering real-time information.

This category exploded in 2025-2026. Browser Use went from zero to 78K GitHub stars in months, making it one of the fastest-growing open-source projects ever. Crawl4AI, at 51K stars, became the default for feeding web content into LLMs. The common thread: agents need to see and interact with the web the way humans do.

The category spans browser automation frameworks, managed browser infrastructure, and AI-native crawlers:

- Browser Use (78K stars) — Dominant browser agent framework; 89% success rate on WebVoyager benchmark; works with any LLM

- Crawl4AI (51K stars) — LLM-friendly web crawler outputting clean markdown; 4x faster than competitors; Apache 2.0

- Skyvern (20K stars) — Uses vision LLMs to understand web pages via screenshots rather than DOM parsing; YC-backed

- Stagehand (21K stars) — AI browser automation by Browserbase; self-healing actions with multi-language SDKs

- Browserbase — Browser-as-a-service for AI agents; managed cloud browsers with session replay and anti-detection

- Playwright MCP (16K stars) — Microsoft’s MCP server for browser automation; uses accessibility snapshots for 10-100x faster interaction than vision approaches

- Firecrawl — Turn any website into LLM-ready data; used by major AI companies for web data extraction

- Steel (6K stars) — Open-source headless browser API; self-hostable with built-in proxy support and anti-detection

The architectural debate is vision-based approaches (Skyvern: screenshot plus vision LLM) versus DOM/accessibility-based approaches (Playwright MCP: structured data). Vision is more robust to UI changes; DOM-based is faster and cheaper. Both will coexist, and either way browser automation is becoming essential infrastructure for agents that need web interaction.

7. AI Agent Protocols (MCP & A2A)

AI agent protocols are open standards defining how agents communicate with tools (MCP) and with each other (A2A), enabling ecosystem interoperability.

Every platform shift needs standards, and 2026 is shaping up to be the year agent protocols go mainstream. Three complementary protocols now define the agent communication stack.

MCP (Model Context Protocol) is winning the tools and data integration layer. Created by Anthropic in November 2024, MCP was donated in December 2025 to the Linux Foundation’s Agentic AI Foundation, which Anthropic co-founded with Block and OpenAI. It is now the standard way LLMs connect to external tools and data sources, with 75+ connectors in Claude alone. MCP support has become table stakes for any agent platform.

A2A (Agent-to-Agent) solves a different problem: how agents talk to each other. Google’s protocol, also donated to the Linux Foundation, now has 150+ supporting organizations and recently added gRPC support. IBM’s ACP (Agent Communication Protocol) merged into A2A in early 2026, consolidating agent-to-agent communication.

AG-UI (Agent-User Interaction Protocol) by CopilotKit tackles the third leg: how agents communicate with frontends and human users. It defines standards for streaming agent state, tool execution, and user interactions to UI components — bridging backend agents and user-facing applications.

One way to think about it: MCP is how agents use tools, A2A is how agents collaborate, and AG-UI is how agents talk to users. All three are essential. If you are building agent infrastructure today, support all three.
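To show what "how agents use tools" looks like on the wire, here is a minimal sketch of an MCP tool invocation. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The tool name `lookup_employee`, its arguments, and the hand-written sample response are assumptions for illustration; no server is contacted, and transport and session setup are left out.

```python
import json
from typing import Any, Dict

def tools_call_request(request_id: int, name: str, arguments: Dict[str, Any]) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })

def text_from_result(raw_response: str) -> str:
    """Extract the text parts from a tools/call response, or raise on error."""
    response = json.loads(raw_response)
    if "error" in response:
        raise RuntimeError(response["error"]["message"])
    parts = response["result"]["content"]
    return "".join(p["text"] for p in parts if p["type"] == "text")

# Hypothetical tool name and arguments.
request = tools_call_request(1, "lookup_employee", {"email": "ada@example.com"})

# Hand-written response in the shape a server is expected to return.
sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "content": [{"type": "text", "text": "Ada Lovelace, Engineering"}],
        "isError": False,
    },
})

print(request)
print(text_from_result(sample_response))
```

In practice you would use an MCP client SDK rather than building messages by hand; the sketch is only meant to show how small the protocol surface is.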

8. AI Coding Agents & AI IDEs

AI coding agents and AI-powered IDEs are autonomous or semi-autonomous tools that write, review, debug, and deploy code — representing one of agentic AI’s most tangible applications.

Coding is the most visible category of agentic AI tools, and the numbers are staggering. Per Anthropic, Claude Code now accounts for 4% of all public GitHub commits, with projections of 20%+ by the end of 2026. A meaningful fraction of the world’s code is now written by an AI agent in a terminal.

Devin’s $73M ARR proves autonomous coding agents are a real market, not a demo. Lovable, at $75M ARR with 30,000+ paying users, shows that the appetite for no-code AI app building is enormous.

Agentic AI Coding Tools Compared:

- Cursor — The AI-native IDE (VS Code fork); used by most Fortune 500 dev teams; indexes entire codebases

- Claude Code — 4% of GitHub commits; agent teams feature for multi-agent coordination

- GitHub Copilot — Agent mode auto-iterates and self-heals; supports Claude 4.5 and Gemini 3 Ultra on Enterprise

- Windsurf — Agentic IDE with multi-file reasoning and repository-scale comprehension

- Devin — First “AI software engineer”; $73M ARR; full SDLC automation

- OpenHands (65k stars) — Open-source; solves 87% of bug tickets same day; 50%+ on SWE-bench

- Bolt.new / v0 / Lovable — AI-powered app builders; Lovable at $75M ARR; Bolt.new has 1M+ AI-generated sites

- Aider / Continue / Cline / Roo Code — Open-source CLI and IDE agents; Cline has 5M+ installs

- OpenAI Codex — Cloud-based coding agent; GPT-5.3-Codex holds state-of-the-art on SWE-Bench Pro; open-source CLI at 59k+ stars

- Amazon Q Developer — AWS’s AI coding assistant; 66% on SWE-Bench Verified; saved Amazon 4,500 developer-years internally

- Replit Agent — Build full-stack applications from natural language descriptions; integrated development with one-click deployment

The pattern: coding agents bifurcate into “copilot” mode (Cursor, Copilot, Continue — augmenting human developers) and “autopilot” mode (Devin, OpenHands, Claude Code agent teams — working autonomously). Both coexist, but autopilot agents drive growth.

9. Enterprise AI Agent Platforms

Enterprise AI agent platforms are production-grade systems from major software vendors deploying AI agents across customer service, HR, finance, and operations at scale.

This is where the big money lives. Per Salesforce’s latest earnings, Salesforce Agentforce reached $540M+ ARR with 18,500 customers — the fastest-growing Salesforce product ever. Every major enterprise vendor now has an agent strategy, deploying them at a pace previously unthinkable.

Top Tools for Building Enterprise AI Agents:

- Salesforce Agentforce — $540M+ ARR; 18,500 customers; hybrid reasoning agents across CRM, sales, service, commerce

- Microsoft Copilot Studio — Build and deploy agents inside Teams and M365; unified governance through Microsoft Agent 365

- ServiceNow AI Agents — End-to-end IT, HR, customer workflows; AI Control Tower for centralized agent management; strategic OpenAI partnership

- Workday AI Agents — HR and finance agents; Frontline Agent cuts manager staffing time by 90%

- IBM watsonx Orchestrate — 100+ domain-specific agents, 400+ prebuilt tools, Agent Catalog

- Oracle AI Agents — AI Agent Platform plus Agent Studio for Fusion Cloud apps; in-database agent execution

- SAP Joule — Collaborative AI agents across business functions; Joule Studio agent builder GA Q1 2026

- Palantir AIP — Ontology-powered; “Agentic AI Hives” for autonomous supply chain and logistics

- Zendesk AI — AI agents for customer service; automated resolution across email, chat, messaging channels

Enterprise adoption patterns vary widely. Some companies start customer-facing (Salesforce, ServiceNow, Zendesk lead here) while others begin with internal operations (Workday, SAP, Oracle automating back-office first). No single playbook exists. Winners connect agents across both domains — customer-facing and internal — into unified agentic layers.

10. AI Clouds & Inference Platforms

AI clouds and inference platforms provide specialized compute infrastructure — GPU clusters, optimized runtimes, serverless endpoints — powering model training and inference for AI agents at scale.

The AI infrastructure layer has exploded as GPU demand far outstrips supply. A new category of AI-native cloud providers has emerged, purpose-built for the unique demands of model training and inference, and it is growing faster than any other segment of the stack.

CoreWeave leads with a $23B+ valuation, offering GPU cloud optimized for AI workloads. Modal became the developer favorite for serverless GPU compute — deploy a function, get a GPU, pay per second. Groq’s custom LPU chips deliver sub-second inference latency, changing what’s possible for real-time agent interactions.

AI Inference Platforms

- Modal — Serverless cloud for AI/ML; GPU inference and training; pay-per-second pricing

- Groq — Ultra-fast LPU inference; sub-second latency for real-time agent use cases

- CoreWeave — GPU cloud infrastructure; $23B+ valuation; purpose-built for AI workloads

- Crusoe — Clean energy AI cloud; sustainable compute for training and inference

- Baseten — Model inference infrastructure with custom GPU clusters and auto-scaling

- Replicate — Run open-source ML models via API; one-line deployment for any model

- Together AI — Open-source model inference at scale; competitive pricing for popular models

- Fireworks AI — Fast generative AI inference platform with compound AI system support

- OpenRouter — Multi-provider model routing; access 200+ models through single API with automatic fallbacks

- LiteLLM — Open-source provider abstraction; unified API for 100+ LLMs with load balancing and cost tracking

For agent builders, inference provider choice directly impacts latency, cost, and user experience. The trend: multi-provider strategies — using OpenRouter or LiteLLM to route between providers based on cost, latency, and availability.
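Here is a minimal sketch of the multi-provider idea: try the cheapest provider first and fall back to the next one when a call fails. It is not the OpenRouter or LiteLLM API. The `route` function, the provider names, the costs, and the two fake provider functions are all invented for illustration.

```python
from typing import Callable, List, Tuple

# (name, cost per call in dollars, function that sends the prompt)
Provider = Tuple[str, float, Callable[[str], str]]

def route(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers cheapest-first; fall back to the next one on failure."""
    errors = []
    for name, _cost, call in sorted(providers, key=lambda p: p[1]):
        try:
            return name, call(prompt)
        except Exception as exc:  # a real router would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))

# Hypothetical providers: one cheap but failing, one pricier but reliable.
def flaky(prompt: str) -> str:
    raise TimeoutError("upstream timed out")

def stable(prompt: str) -> str:
    return f"echo: {prompt}"

used, reply = route(
    "hello",
    [("stable-but-pricey", 0.010, stable), ("cheap-but-flaky", 0.002, flaky)],
)
print(used, reply)
```

Sorting by cost is just one policy; the same loop works if you rank by measured latency or recent error rate instead.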

11. Foundation Models Powering AI Agents

Foundation models are the large language models powering AI agents — providing the reasoning, planning, and tool-use capabilities enabling autonomous action.

Every agent is powered by a foundation model, and competition here has never been fiercer. Two narratives define 2026: frontier capabilities and open-source momentum.

Closed-Source AI Models

- OpenAI — Operator for computer use; Deep Research for multi-step web research; new ChatGPT Agent combines everything into one autonomous agent

- Anthropic — 1M context; Computer Use in beta; Claude Code for agentic development; MCP as the tool integration standard

- Google DeepMind — Advanced thinking capabilities; Project Mariner for browser automation; Jules coding agent out of beta

- Mistral — Le Chat agents with free Gmail and Calendar hooks; 675B total parameters across latest models

- xAI — Grok models with real-time X data access; strong reasoning and function calling

- Cohere — Enterprise-focused LLMs; Command R+ optimized for RAG and tool use; strong multilingual support across 100+ languages

Open-Source AI Models

- Meta — 10M context window; Scout, Maverick, upcoming Behemoth; open-weight with commercial use; changes agent deployment economics entirely

- DeepSeek — Trained for approximately $6M; MIT licensed; competitive with GPT-4o on key benchmarks; the cost-efficiency story reshaping industry assumptions

- Google Gemma — Open models from Gemini research; runs on consumer hardware with 128K context and function calling

- Alibaba Qwen — Apache 2.0; 300M+ downloads, 100K+ derivative models on Hugging Face; Qwen-Coder scores well on SWE-Bench

The implication for agent builders: you no longer need to choose between capability and cost. Open-source models are closing the gap fast, and Llama 4’s 10M context window combined with low-cost self-hosting makes agentic workloads viable at scales that were previously prohibitive. But larger context windows don’t eliminate context management challenges. See why agents still fail at context engineering even with million-token budgets.

What Comes Next for the AI Agent Landscape

The AI agent landscape will look meaningfully different in six months. New categories will emerge, consolidation will accelerate, and the top tools in each category will face challengers nobody has heard of yet. That’s the pace of this space.

What remains constant: AI agents are moving from experimental to production, from single-task to multi-agent, and from demos to enterprise infrastructure. The question isn’t whether your organization will use AI agents — it’s which layer of the stack you’ll build on, and how fast you can get there.

If you are building AI agents that need to take actions in enterprise software (HubSpot, Salesforce, Workday, SAP, or any of the 200+ supported apps your organization runs), start building with StackOne. Backed by Google Ventures and Workday Ventures with $24M total funding, StackOne built the platform with the deepest action coverage, 10,000+ actions across 200+ connectors, for this moment.

I’ll update this landscape quarterly. Follow me on LinkedIn for the next edition, and let me know what I missed in the comments.