Richard South · · 8 min

Why AI Agents Are Changing the Integration Paradigm for B2B SaaS Platforms

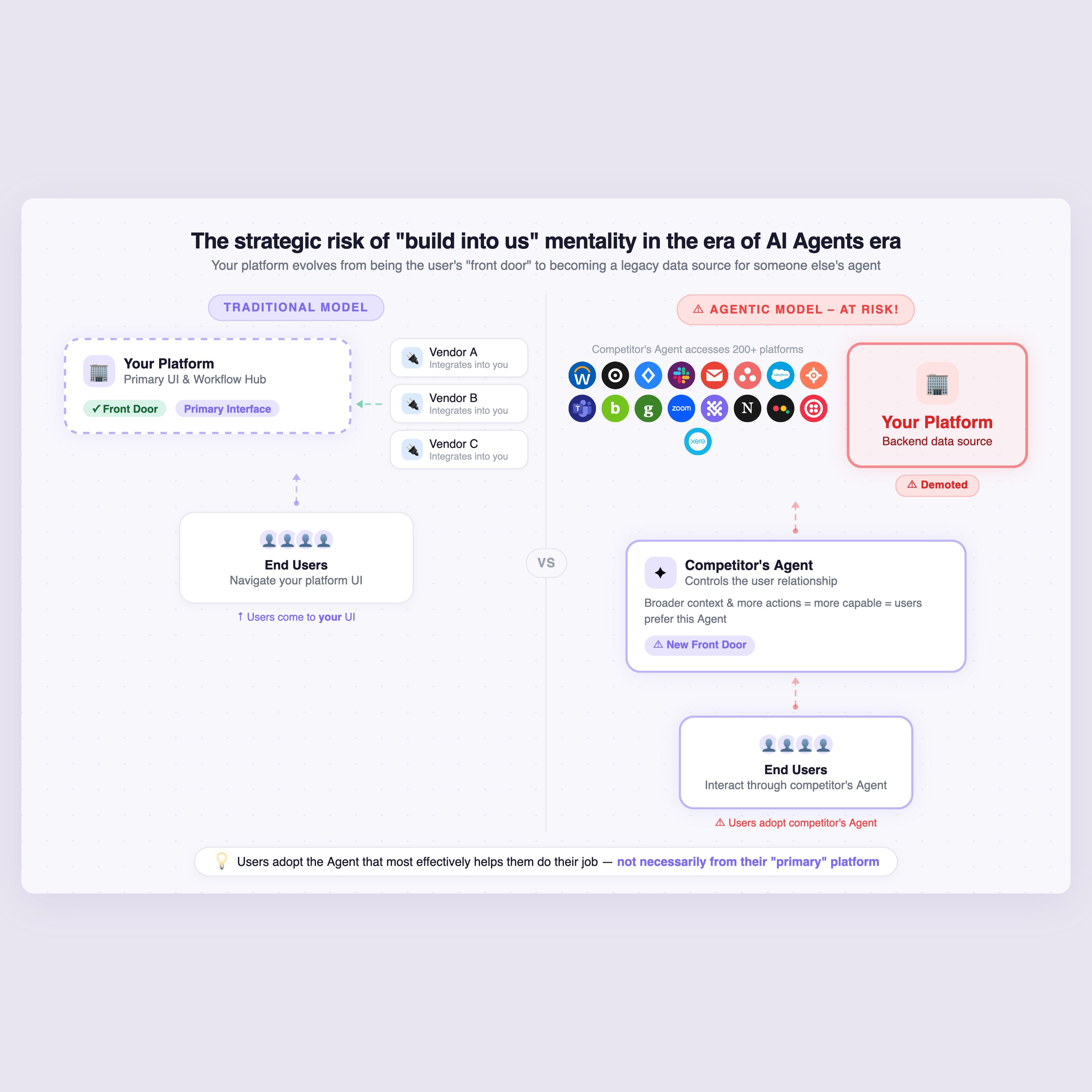

TL;DR: Traditional “build to us” integration strategies are becoming obsolete in the age of AI agents. Companies that don’t enable their agents to connect outward risk becoming legacy data sources while competitors’ agents become the primary user interface.

The Traditional Integration Model for System of Record Platforms

At StackOne, we regularly discuss agentic connectivity strategies with large “system-of-record” platforms across a range of product categories, including HRIS, CRM, marketing, learning, and many more.

These market-leading platforms have historically followed a clear integration strategy: build a robust API and let other vendors integrate into them. This approach made perfect sense. Their customers use hundreds or thousands of applications, and maintaining integrations to all of them would be an enormous engineering burden. Instead, these platforms focused on creating well-documented APIs and encouraged their ecosystem to build connections.

Most companies initially approach their AI agent connectivity strategy with this same mindset, but it quickly breaks down in the agent era.

Why the Old Paradigm Breaks Down for AI Agents

AI Agents Need Cross-Platform Context and Actions

To build a truly differentiated AI agent, B2B SaaS vendors need more than a chatbot interface on their own data. Third-party connectivity becomes essential because:

- Agents require broad context: Understanding user intent requires data from across the entire tech stack, not just one category-specific “system of record” (e.g. the HRIS). For example, a seemingly simple use case such as off-boarding an employee might touch 8–10 different platforms, if not more.

- Agents must take cross-platform actions: Executing complex workflows means operating across multiple platforms simultaneously

- Natural language interfaces are becoming the front door: Users are shifting from navigating dozens of individual platform UIs to interacting through conversational interfaces that span their entire tech stack
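To make the off-boarding example concrete, here is a minimal sketch of how an agent's usefulness tracks the number of platforms it can act on. Everything in it is an assumption for illustration: the `Agent` class, the platform names, and the `make_step` helper are hypothetical and do not represent a real StackOne or vendor API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# All platform and action names here are hypothetical placeholders,
# not real StackOne or vendor APIs.
Action = Callable[[str], str]


@dataclass
class Agent:
    name: str
    connectors: Dict[str, Action] = field(default_factory=dict)

    def register(self, platform: str, action: Action) -> None:
        """Give the agent an action it can execute on one platform."""
        self.connectors[platform] = action

    def offboard(self, employee_id: str, required: List[str]) -> Dict[str, str]:
        """Run the off-boarding step on every required platform.

        Platforms without a connector are reported as gaps that someone
        (or some other agent) still has to handle.
        """
        results: Dict[str, str] = {}
        for platform in required:
            action = self.connectors.get(platform)
            results[platform] = action(employee_id) if action else "NOT CONNECTED"
        return results


def make_step(platform: str) -> Action:
    """Build a stand-in action that only describes what it would do."""
    return lambda employee_id: f"deprovisioned {employee_id} in {platform}"


def coverage(results: Dict[str, str]) -> float:
    """Fraction of required platforms the agent could actually act on."""
    done = sum(1 for status in results.values() if status != "NOT CONNECTED")
    return done / len(results)


# Eight illustrative systems an off-boarding might touch.
REQUIRED = ["hris", "identity", "payroll", "email",
            "ticketing", "crm", "lms", "expenses"]

# An agent that can only see its own system of record.
hris_only = Agent("hris-only")
hris_only.register("hris", make_step("hris"))

# An agent with outbound connectivity to the whole stack.
connected = Agent("connected")
for platform in REQUIRED:
    connected.register(platform, make_step(platform))

print(coverage(hris_only.offboard("emp-42", REQUIRED)))  # 0.125
print(coverage(connected.offboard("emp-42", REQUIRED)))  # 1.0
```

Both agents could sit on the same LLM. In this sketch the only difference between them is the connector registry, which is the point the list above makes about context and actions.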

Competitive Differentiation Shifts to the Backend

When users interact through natural language interfaces, the front-end experience becomes commoditized. The real differentiation lies in:

- The breadth of context the agent can access

- The scope of actions the agent can execute

- The agent’s ability to orchestrate complex workflows across multiple systems

Critical insight: End users will ultimately adopt the agent that most effectively helps them do their job — not necessarily the agent from their “primary” platform today.

For a deeper analysis of this shift and how “the interface is being absorbed,” see the excellent write-up “The Crumbling Workflow Moat” by Nicolas Bustamante, CEO of Fintool.

The Strategic Risk of “Build Into Us” in the Agent Era

Here’s the conundrum for platforms still pursuing the traditional integration model:

Since the same LLM capabilities are available to everyone, competitive advantage comes from the scope of context and actions available to an agent. If you’re encouraging other vendors to build into your platform — perhaps by offering an MCP server as your “new API for the marketplace” — you’re actually making their agents more capable and desirable than yours.

The Consequence: From Front Door to Legacy Data Source

As this dynamic plays out, market-leading platforms risk a fundamental shift in their relationship with users:

- Before: Your platform is the primary interface and workflow hub

- After: Your platform becomes a backend data source accessed through someone else’s agent

- Result: User stickiness and strategic control migrate to whoever controls the most capable agent

How StackOne Enables the New Agentic Integration Paradigm

B2B SaaS vendors chose the traditional “build to us” model for good reasons — scaling integrations across hundreds or thousands of platforms is genuinely challenging. StackOne helps innovative companies solve this challenge with:

Comprehensive Agentic Connectors

- Hundreds of pre-built connectors exposing deep actions across complex enterprise systems (Workday, Salesforce, Oracle, Jira, and more)

- Support for sophisticated, optimized agentic actions — not just basic CRUD operations

Protocol Flexibility for Future-Proofing

- Support for MCP (Model Context Protocol), AI SDK, A2A (Agent-to-Agent), and RPC protocols

- Enables both autonomous agent workflows and deterministic integrations

- Adapt to evolving standards without rebuilding your integration layer
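As a rough illustration of why protocol flexibility matters, the sketch below renders one action definition into two wire formats. The MCP shape follows the protocol's JSON-RPC 2.0 `tools/call` request; the tool name `hris_terminate_employee`, its arguments, and the "plain RPC" payload are hypothetical and chosen only for this example. It is not a description of how StackOne implements its protocol support.

```python
import json
from typing import Any, Dict


def to_mcp_request(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize a tool invocation as an MCP `tools/call` JSON-RPC 2.0 request."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


def to_plain_rpc(tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize the same invocation in a hypothetical in-house RPC format."""
    return json.dumps({"action": tool, "input": arguments})


# One action definition (hypothetical tool name and arguments).
ACTION = ("hris_terminate_employee", {"employee_id": "emp-42"})

print(to_mcp_request(1, *ACTION))
print(to_plain_rpc(*ACTION))
```

Keeping the action definition separate from its serialization is one way to add or swap protocols later without touching the underlying integration logic.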

AI Tooling for Scale

- Build integrations to niche platforms in your customers’ tech stacks with AI Builder

- Create competitive differentiation through comprehensive third-party connectivity

- Manage integrations through your existing development pipelines

Take Action: Start Building Your Agentic Integration Strategy

If you want your platform to remain central to your customers’ daily work rather than become a backend data source for agents built by someone else, you can:

- Get started with StackOne for free and explore our connector ecosystem

- Schedule a consultation to discuss your specific agentic integration strategy

FAQs

What is agentic connectivity?

Agentic connectivity refers to the infrastructure that enables AI agents to access data and take actions across multiple platforms, allowing them to orchestrate complex workflows on behalf of users.

Why can’t platforms use the same integration strategy for AI agents as they did for traditional APIs?

Traditional integration strategies focused on getting others to build into your platform. For AI agents, this makes competitors’ agents more capable than yours, shifting user loyalty away from your platform.

What is an MCP server?

An MCP server exposes a platform’s data and tools to AI agents through MCP (Model Context Protocol), a standard for connecting AI agents to data sources and tools. While useful, simply offering an MCP server without building outbound connections puts your platform at strategic risk.

How does StackOne differ from traditional integration platforms?

StackOne specifically focuses on agentic use cases, providing deep, highly optimized agentic action capabilities (not just data access) across hundreds of platforms, with support for multiple protocols including MCP, AI SDK, A2A, and RPC.